AI Traffic Has Big Implications for Towers and Edge Infrastructure

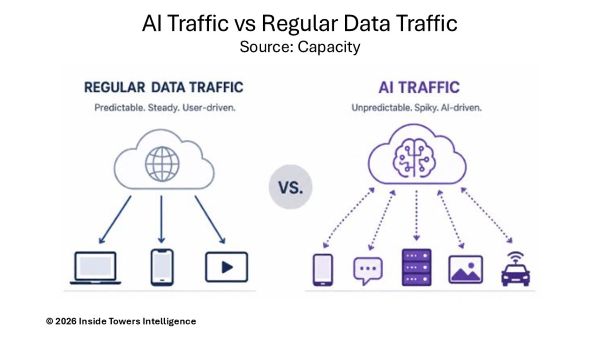

In the age of AI, one might conclude that traffic generated by AI inference and applications will be the same as regular data traffic, just more of it and at faster speeds. It turns out there are significant differences in the nature and volume of traffic generated by AI workloads compared with traditional data processing, according to Capacity.

Those differences have real implications for how network capacity is planned, provisioned, and ultimately priced, whether that capacity runs between GPUs in a data center, between data centers, or from data centers to edge infrastructure and end users. AI traffic carried over broadband and wireless networks falls into two distinct categories, each with unique transmission characteristics.

First is AI training traffic, which is generated when large language models (LLMs) are being built or updated. Such data tends to be produced in bulk. The associated transfers are scheduled, and large data volumes move in parallel between thousands of GPUs, either within a single data center or between a small number of connected facilities.

Capacity points out that the latency tolerance of training traffic is relatively high, but the sheer volume and the east-west nature of the traffic flow (that is, moving between servers) put pressure on internal network fabric and interconnection in ways that traditional north-south enterprise traffic (between a server and an end user) does not.

Second is AI inference traffic, which is generated when a trained LLM is put to use, for example, when someone submits a prompt, runs an image through a recognition model, or triggers an AI-powered task. Unlike training traffic, AI inference occurs in real time, varies unpredictably in volume, and is highly sensitive to latency. It also happens close to the user, whether on fixed devices in a specific location or on mobile devices as users move. Some digital infrastructure operators estimate that as much as 80 percent of AI inference will occur on mobile devices.

Compared with AI traffic, conventional internet traffic, such as video streaming, web browsing, and file transfers, follows relatively predictable patterns. Like voice traffic before the internet era, it stays steady during the day, peaks in the evening, and declines overnight. It also tends to grow gradually over time, generally tracking population growth, which makes it easier for network planners to model in populated areas.

AI inference traffic is less predictable and can surge when a new model launches or an app goes viral. As inference moves to edge locations, demand also shifts geographically. Because it consists of many small, latency-sensitive requests rather than large, steady transfers, networks must handle it differently at the transport layer.

Training workloads are creating much greater demand for data center interconnections. GPU clusters increasingly require 400G and even 800G fiber links, both within data centers and between them.

Mobile network operators also must balance cost and performance as they deploy new high-band spectrum, decide what cell-site equipment is needed for AI-in-RAN and AI-on-RAN applications, and determine when and where to build new towers. While 10G fiber links to towers are common today, backhaul capacity could rise to 25-50G or higher as AI inference usage grows.

Because the timing and scale remain uncertain, MNO network planners and their tower partners should address these issues sooner rather than later. Forecasters suggest that mobile data demand is doubling every two to three years and that AI inference traffic could begin scaling significantly by 2030.
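To put the doubling rate in perspective, here is a rough back-of-the-envelope sketch. It assumes, purely for illustration, that tower backhaul needs grow in step with mobile data demand and that growth is a smooth exponential; neither assumption comes from the forecasters cited above. The function name `years_to_reach` is hypothetical.

```python
import math


def years_to_reach(start_gbps, target_gbps, doubling_years):
    """Years for demand to grow from start to target under steady doubling.

    Assumption (illustrative only): demand(t) = start * 2 ** (t / doubling_years).
    """
    if start_gbps <= 0 or target_gbps <= 0 or doubling_years <= 0:
        raise ValueError("all inputs must be positive")
    return doubling_years * math.log2(target_gbps / start_gbps)


if __name__ == "__main__":
    # 10G today, 25G and 50G as the possible future backhaul levels.
    for doubling in (2, 3):
        for target in (25, 50):
            yrs = years_to_reach(10, target, doubling)
            print(f"doubling every {doubling} years: "
                  f"10G -> {target}G in about {yrs:.1f} years")
```

Under these assumptions, a 10G link would need to grow to 25G in roughly three to four years and to 50G in roughly five to seven years, which is why the planning window matters.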

Even so, no one knows exactly when that shift will occur, only that it is coming. The fact that hundreds of billions of dollars are committed to new data center investments suggests AI development may be advancing faster than expected.

Network operators that plan ahead and invest early will likely be best prepared for what comes next. Many are already building high-capacity, low-latency interconnects, flexible edge infrastructure, and the ability to add capacity quickly wherever demand emerges.

As a result, the old approach of forecasting traffic growth three years out and building to a fixed target is rapidly becoming outdated.

By John Celentano, Inside Towers Business Editor